
2/14/2024 Swish activation function vs relu

But when I used a learning rate of 0.02 with swish(), I got essentially the same results. Compared to the NN with tanh() and a learning rate of 0.01, the swish() version learned a bit slower.

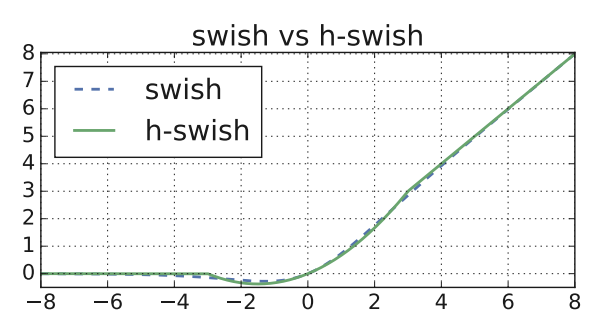

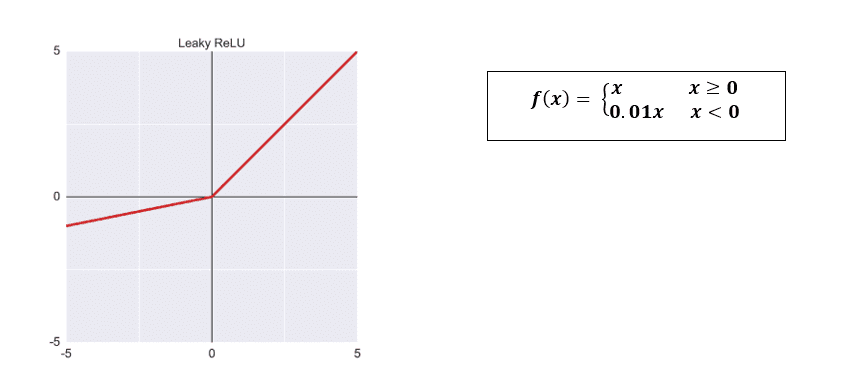

I took an existing 6-(10-10)-3 classifier I had, which used tanh() on the two hidden layers, and replaced tanh() with swish(). The demo run on the right uses swish() activation with a LR = 0.02. The demo run on the left uses tanh() activation with a LR = 0.01. So, adding what are essentially unnecessary functios to PyTorch can have a minor upside. But if swish() had been in PyTorch I would have discovered it earlier. Adding such a trivial function just bloats a large library even further. The fact that PyTorch doesn’t have a built-in swish() function is interesting. Update: I just discovered that PyTorch 1.7 does have a built-in swish() function. Z = self.oupt(z) # no softmax for multi-class # z = T.tanh(self.hid1(x)) # replace tanh() w/ swish() However, it’s trivial to implement inside a PyTorch neural network class, for example: At the time I’m writing this bog post, Keras and TensorFlow have a built-in swish() function (released about 10 weeks ago), but the PyTorch library does not have a swish() function. The Wikipedia entry on swish() points out that swish() is sometimes called sil() or silu() which stands for sigmoid-weighted linear unit. The three related activation functions are: It’s sort of a cross between logistic sigmoid() and relu(). I made this graph of sigmoid(), swish(), and relu() using Excel. The swish() function was devised in 2017. Many variations of relu() followed but none were consistently better so relu() has been used as a de facto default since about 2015. Then relu() was found to work better for deep neural networks. In the early days of NNs, logistic sigmoid() was the most common activation function. I don’t know Thorsten personally, but he seems like a very bright and creative guy.

My thanks to fellow ML enthusiast Thorsten Kleppe for pointing swish() out to me when he mentioned the similarity between swish() and gelu() in a Comment to an earlier post. I was recently alerted to the new swish() activation function for neural networks. Swish is much more complicated than ReLU (when weighted against the small improvements that are provided) so it might not end up with as strong an adoption as ReLU.It’s very difficult, but fun, to keep up with all the new ideas in machine learning.A smooth landscape should be more traversable and less sensitive to initialization and learning rates. Since the Swish function is smooth, the output landscape and the loss landscape are also smooth. Gating generally uses multiple scalar inputs but since self-gating uses a single scalar input, it can be used to replace activation functions which are generally pointwise.īeing unbounded on the x>0 side, it avoids saturation when training is slow due to near 0 gradients.īeing bounded below induces a kind of regularization effect as large, negative inputs are forgotten. Uses self-gating mechanism (that is, it uses its own value to gate itself). Swish-β can be thought of as a smooth function that interpolates between a linear function and RELU. The paper shows that Swish is consistently able to outperform RELU and other activations functions over a variety of datasets (CIFAR, ImageNet, WMT2014) though by small margins only in some cases. The paper presents a new activation function called Swish with formulation f(x) = x.sigmod(x) and its parameterised version called Swish-β where f(x, β) = 2x.sigmoid(β.x) and β is a training parameter.
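To make the formulas and the tanh()-to-swish() swap concrete, here is a minimal sketch in PyTorch. It is not the original demo code: the names `swish`, `SwishBeta`, `Net` and `hid2` are my own assumptions, and only `hid1`, `oupt` and the 6-(10-10)-3 layer sizes come from the text above. The Swish-β module follows the 2x · sigmoid(βx) form quoted above, with β stored as a trainable parameter.

```python
import torch as T
import torch.nn.functional as F


def swish(x):
    # swish(x) = x * sigmoid(x), applied elementwise
    return x * T.sigmoid(x)


class SwishBeta(T.nn.Module):
    # Hypothetical module for the Swish-beta form quoted in the text:
    # f(x, beta) = 2x * sigmoid(beta * x), with beta learned during training.
    def __init__(self, beta=1.0):
        super().__init__()
        self.beta = T.nn.Parameter(T.tensor(float(beta)))

    def forward(self, x):
        # beta = 0 gives 2x * 0.5 = x (linear); large beta approaches 2 * relu(x)
        return 2.0 * x * T.sigmoid(self.beta * x)


class Net(T.nn.Module):
    # Assumed layout of a 6-(10-10)-3 classifier; hid2 is a guessed name.
    def __init__(self):
        super().__init__()
        self.hid1 = T.nn.Linear(6, 10)
        self.hid2 = T.nn.Linear(10, 10)
        self.oupt = T.nn.Linear(10, 3)

    def forward(self, x):
        # z = T.tanh(self.hid1(x))  # replace tanh() w/ swish()
        z = swish(self.hid1(x))
        z = swish(self.hid2(z))
        z = self.oupt(z)  # no softmax for multi-class
        return z
```

On PyTorch 1.7 or later, the hand-written `swish()` should give the same values as the built-in `F.silu()`, so the helper is only needed on older versions. Swapping `swish` for a `SwishBeta()` instance in `forward()` would let the network learn β, at the cost of one extra parameter per activation.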
